By Merlin Carter

First off, this is not a critique of Amazon’s Elastic Beanstalk. It’s a great service that allows you to deploy web applications without having a lot of in-house DevOps expertise. If you’re a young startup trying to deploy your web app on a tight schedule, it’s naturally a tempting choice, but sometimes it’s the wrong one.

For those in a hurry, I’ll be explaining why Elastic Beanstalk:

- is a bad choice if you need worker processes.

- isn’t suitable for “mission-critical” applications.

- isn’t great if you need a lot of environment variables.

Should you be worried about these limitations? The answer really depends on how complex your product is. Let me give you an example.

At Project A, some of our developers were helping one of our portfolio companies, sennder, to scale their IT operations. They had relied heavily on the Elastic Beanstalk service to deploy their application quickly. But after our developers joined forces with theirs, everyone soon noticed that Elastic Beanstalk just wasn’t scalable. To understand the scalability problem, it helps to know a bit more about sennder’s product.

A modern digital freight forwarder

The sennder platform offers shippers access to a connected fleet of thousands of trucks. The platform uses proprietary technology to automatically manage cargo loads across this fleet, kind of like a load balancer for freight:

- If you want to freight something across Europe, their platform can automatically find trucks with enough capacity.

- If you’re a small trucking business with spare capacity, their platform helps to ensure that your trucks always have a full load.

The goal is to save money for their shippers while helping carriers make money. They also want to reduce carbon emissions by minimizing the number of half-empty trucks on the road.

The platform consists of a front end hosted in Firebase and a web app deployed on Elastic Beanstalk. The web app relies on several external systems and processes. For example, it communicates with a service that receives and analyzes GPS data from delivery trucks (this data allows shippers to track the progress of their freight “tours”). These kinds of transactions must be performed asynchronously because it can take a while to process the data. And therein lies the first problem.

Elastic Beanstalk is a bad choice if you need worker processes

The whole point of a worker process is to perform a task in the background without slowing down your main web app. But Elastic Beanstalk doesn’t support this option in a scalable way.

For example, sennder’s platform relies on separate processes to update and aggregate tracking data. Additionally, users can upload data in large CSV files and have that data processed in the background. However, Elastic Beanstalk is designed to manage multiple instances of the same process — specifically, a single web app.

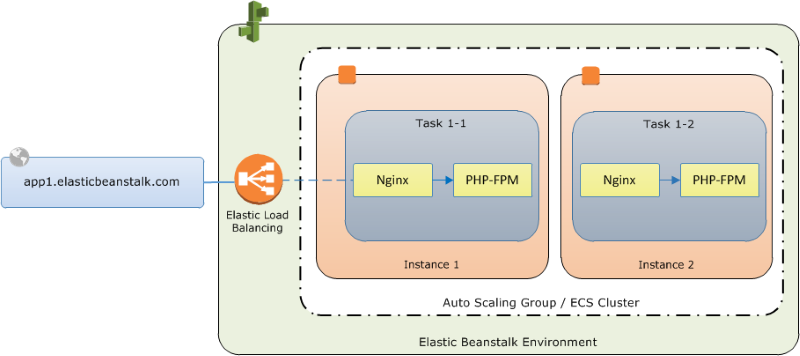

Behind the scenes, Elastic Beanstalk uses Amazon Elastic Container Service (ECS) to scale the resources consumed on the Amazon Compute Cloud (EC2). Take a look at this diagram from Amazon’s documentation:

The diagram is from an article on how to set up a multi-container environment. Note that the “PHP-FPM” container is just an example — you could run your web app in any language. An Nginx installation, however, is mandatory. Elastic Beanstalk uses Nginx as the reverse proxy to map the web app to the load balancer on port 80.

You can see that Elastic Beanstalk supports multiple containers (yellow), but they are all defined in one “task” that is deployed to an ECS container instance. A task is basically an instruction to run specific containers.
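For reference, the multicontainer Docker platform described in that documentation reads its container list from a `Dockerrun.aws.json` file (format version 2). The sketch below builds such a file as a Python dictionary to show the “one task holds everything” shape. The container names, images, and memory values are hypothetical, and the field set is trimmed to a minimum, so treat it as an illustration rather than a production config.

```python
import json

# Hypothetical multicontainer definition in the Dockerrun.aws.json (version 2)
# format. Every container, including the background worker, sits in ONE
# containerDefinitions list, which Elastic Beanstalk turns into a single ECS
# task that is placed on every instance in the environment.
dockerrun = {
    "AWSEBDockerrunVersion": 2,
    "containerDefinitions": [
        {
            "name": "nginx-proxy",
            "image": "nginx",
            "essential": True,
            "memory": 128,
            # Nginx receives traffic from the load balancer on port 80.
            "portMappings": [{"hostPort": 80, "containerPort": 80}],
            "links": ["web-app"],
        },
        {
            "name": "web-app",
            "image": "example/web-app:latest",  # hypothetical image
            "essential": True,
            "memory": 512,
        },
        {
            "name": "csv-import-worker",
            "image": "example/csv-worker:latest",  # hypothetical image
            "essential": False,
            "memory": 256,
        },
    ],
}

print(json.dumps(dockerrun, indent=2))
```

Because the worker lives in the same list as the web app, there is no separate knob for how many copies of it run.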

The catch is that Elastic Beanstalk abstracts away all the complexity normally associated with managing capacity in EC2. It adds your containers to a task, defines a service, and deploys the tasks to container instances in an ECS cluster. You pay for this convenience with flexibility: as I mentioned before, Elastic Beanstalk uses only one task, or “process.”

Let’s compare this with the flexibility of using ECS directly. If you managed a SaaS platform in ECS, you could have multiple types of tasks. For example, “Task A” could run your main web app, and “Task B” and “Task C” could do background work such as processing large file uploads or aggregating data. To scale the workload and manage costs, you could run Task A in many ECS container instances but restrict Tasks B and C to a limited number of instances.

In Elastic Beanstalk, you can’t do this — you’re stuck with “Task A,” which has to run your entire SaaS platform.
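To make the difference concrete, here is a toy Python model (not an AWS API) that counts how many containers you end up running under each approach. The names `Workload`, `bundled_containers`, and `separate_services`, and the example numbers, are hypothetical; the model assumes that a bundled task places exactly one copy of every container on each instance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """How many copies of each (hypothetical) task type you actually need."""
    web_copies: int       # "Task A": the main web app, sized for traffic
    worker_b_copies: int  # "Task B": e.g. processing large file uploads
    worker_c_copies: int  # "Task C": e.g. aggregating tracking data


def bundled_containers(w: Workload) -> dict[str, int]:
    """Elastic Beanstalk style: one task holds every container.

    Each instance runs one copy of each container, so you need as many
    instances as the most demanding container type, and every other
    container type is duplicated on all of them.
    """
    instances = max(w.web_copies, w.worker_b_copies, w.worker_c_copies)
    return {"web": instances, "worker_b": instances, "worker_c": instances}


def separate_services(w: Workload) -> dict[str, int]:
    """Plain ECS style: each task type scales independently."""
    return {
        "web": w.web_copies,
        "worker_b": w.worker_b_copies,
        "worker_c": w.worker_c_copies,
    }


# Busy web traffic, but the workers only need one copy each.
demand = Workload(web_copies=6, worker_b_copies=1, worker_c_copies=1)
bundled = bundled_containers(demand)
separate = separate_services(demand)
print(sum(bundled.values()), sum(separate.values()))
```

With these numbers, the bundled layout runs 18 containers where 8 would do, because scaling the web app drags two extra worker copies onto every new instance.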

Now, there are, of course, workarounds — one option is to run the worker processes in separate threads on the instance where the main web app is running. However, this workaround would make the worker processes difficult to monitor and hard to scale.
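A minimal sketch of that thread-based workaround, using only Python’s standard library: the web process enqueues jobs (here, hypothetical CSV file names) and a background thread works through them. `process_upload` is a placeholder for the real work. The sketch also shows the drawback: the worker has no existence outside the web app’s process, so it cannot be scaled, restarted, or health-checked on its own.

```python
import queue
import threading

# In-process job queue shared by the web app and its background thread.
jobs: queue.Queue = queue.Queue()
processed: list[str] = []


def process_upload(name: str) -> None:
    # Placeholder for slow work such as parsing a large CSV upload.
    processed.append(name.upper())


def worker() -> None:
    while True:
        item = jobs.get()
        try:
            if item is None:  # sentinel value: stop the worker
                return
            process_upload(item)
        finally:
            jobs.task_done()  # runs even when we return on the sentinel


# The worker thread lives inside the web app's process, so it scales
# (and fails) together with the web app itself.
thread = threading.Thread(target=worker, daemon=True)
thread.start()

# A request handler would just enqueue work and return immediately.
jobs.put("carrier_upload_1.csv")
jobs.put("carrier_upload_2.csv")
jobs.put(None)
jobs.join()  # wait until every queued item has been marked done
```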

If you need more capacity for your web app containers, you could add more instances, but you’ll be duplicating the worker containers as well. This is because all the containers that are required to run your platform are defined in a single task and deployed to each instance. But even if you opt for this workaround, you’ll run into monitoring issues, which is why…

Elastic Beanstalk isn’t suitable for “mission-critical” apps

This is because failed deployments are notoriously hard to troubleshoot. If you trawl through Stack Overflow, you’ll find many reports about processes getting terminated without any clear explanation.

Take this quote from a 2016 Medium article about Elastic Beanstalk:

…overall, we didn’t know what failed, and it’s never a good thing to not be sure that your machine is in a good state…

While the original author had a generally good experience with it, this quote sums up why Elastic Beanstalk is not the best solution for mission-critical applications.

And when I say “mission critical”, I mean an app that should never go down, and if it does, you need to provide a detailed root cause analysis of what went wrong.

For example, I once worked for a company that provided over-the-air software updates to connected cars. Their platform consisted of multiple microservices that ran in a Kubernetes cluster. If an outage prevented a vehicle manufacturer from pushing a hotfix out to their customers, it was a big deal — we had to provide a precise explanation and detail the measures that we would take to improve our infrastructure.

You’re going to find it difficult to provide that kind of audit trail if you use Elastic Beanstalk.

Let me use a real-life example to illustrate why.

The mysterious case of the failed deployment

We had a situation where we were deploying a major update within a specific maintenance window outside normal working hours. The developers needed to take the whole web app down, but only for about 10 to 20 minutes, or so they thought.

The update in question was a database migration that enabled the platform to support multiple tenants. The developers needed to stop the application temporarily so that nothing interfered with the database while it was being migrated. As the web app was redeployed, Elastic Beanstalk reported the status “OK” for all instances, but for some reason, the environment as a whole was stuck in a “warning” state. Unfortunately, there are no log files for the entire environment, only for individual instances.

But when the developers tried to download logs from the instances to troubleshoot further, they found that they couldn’t. You can only download the instance logs when the environment is in an “OK” state, and it wasn’t “OK”, so there were no logs to be had.

Next, they tried some solutions which had worked previously:

- They terminated and restarted the instances (even though they were apparently “OK”).

- They created a new (but identical) version of the app and redeployed it.

- They rebuilt the entire Elastic Beanstalk environment.

None of this worked.

After 4 hours of research and repeated attempts to fix the environment, they eventually got it running. But to this day, no one knows why the solution worked or what originally went wrong.

And the solution? They simply created a completely new Elastic Beanstalk environment and copied over the same configuration files and environment variables. Speaking of environment variables…

Elastic Beanstalk isn’t great if you need a lot of environment variables

The simple reason is that Elastic Beanstalk has a hard limit of 4 KB for all key-value pairs combined.

As the platform got more complex, our developers had to add more environment variables, such as API keys and secrets for third-party systems. One day, they ran into this error when trying to deploy:

```
EnvironmentVariables default value length is greater than 4096
```

The environment had accumulated 74 environment variables, a few of which had exceedingly verbose names. They applied some short-term workarounds, such as changing values like “True” and “False” to “1” and “0”, but they needed a better solution. They tried to find out if there was a way of extending this limit. No luck.
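If you want to see how close you are to the ceiling before a deployment fails, a rough check is easy to script. This sketch assumes the limit is measured over the properties written as `key=value` strings (check the current Elastic Beanstalk documentation for the exact accounting), and the variable names are hypothetical. It also applies the “True”/“False” to “1”/“0” stopgap to show how little it buys.

```python
# Assumption: each property counts as a "key=value" string, and the combined
# size must stay within 4,096 bytes. The exact accounting may differ.
ENV_LIMIT_BYTES = 4096


def env_size_bytes(env: dict[str, str]) -> int:
    """Approximate combined size of all properties as UTF-8 'key=value' strings."""
    return sum(len(f"{key}={value}".encode("utf-8")) for key, value in env.items())


def shorten_booleans(env: dict[str, str]) -> dict[str, str]:
    """The stopgap: store 'True'/'False' as '1'/'0'."""
    mapping = {"True": "1", "False": "0"}
    return {key: mapping.get(value, value) for key, value in env.items()}


env = {
    "ENABLE_TRACKING_AGGREGATION": "True",  # hypothetical variable names
    "ENABLE_CSV_BACKGROUND_IMPORT": "False",
    "THIRD_PARTY_API_KEY": "x" * 40,        # stand-in for a real secret
}
before = env_size_bytes(env)
after = env_size_bytes(shorten_booleans(env))
print(before, after, after <= ENV_LIMIT_BYTES)
```

Each boolean saves only three or four bytes, while a single API key can cost dozens, which is why secrets are usually the first thing to move elsewhere.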

As this Stack Overflow answer explains, Elastic Beanstalk uses another Amazon product, AWS CloudFormation, to provision environments. So the 4 KB limit actually comes from CloudFormation, and it’s hardcoded into the system (4 KB also happens to be the default memory page size of the Linux kernel on x86). Of course, there are workarounds for this issue. Keys and secrets can consume a lot of your environment variable allowance, so many people suggest storing secrets in a separate system, but the limit is still a bit annoying. After all, comparable platforms, such as Heroku, are far less restrictive.
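One common way to free up that allowance is to keep secrets in AWS Secrets Manager and fetch them at startup. A minimal sketch, assuming the secrets are stored together as one JSON string and that the instance role is allowed to call `secretsmanager:GetSecretValue`; `load_secrets` and the secret ID are hypothetical names. The client is passed in, so the same function works with a real boto3 client or with a stub.

```python
import json
from typing import Any


def load_secrets(client: Any, secret_id: str) -> dict[str, str]:
    """Fetch a bundle of secrets stored as a single JSON string.

    `client` is expected to behave like boto3's Secrets Manager client,
    whose get_secret_value() response carries the payload in "SecretString".
    """
    response = client.get_secret_value(SecretId=secret_id)
    return json.loads(response["SecretString"])
```

In production this might be called as `load_secrets(boto3.client("secretsmanager"), os.environ["APP_SECRETS_ID"])`, where `APP_SECRETS_ID` is a hypothetical property. That way, one short environment property replaces dozens of keys.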

When one of our developers contacted AWS support about the issue, a support rep basically told us that Elastic Beanstalk wasn’t suitable for our use case and that we should move to a more advanced product.

So there it was, straight from the horse’s mouth. But…

More advanced products need dedicated DevOps expertise

Like I said in the intro, Elastic Beanstalk is great if you don’t have any in-house DevOps expertise. When Project A first invested in sennder, the startup only had a handful of developers and an aggressive product roadmap (like most startups). There was little time to spend on learning a complex deployment system, and Elastic Beanstalk made it easy enough for the developers to deploy their own code. The problem was that they had a hard time fixing things when they broke.

And therein lies the paradox. Some people might read this article and think, “What? The solutions to these problems are explained in the documentation…” But chances are, those people have specialist knowledge and know where to look (AWS documentation is vast). Plus, the whole selling point of Elastic Beanstalk is that you don’t need specialist knowledge. If you know exactly what Elastic Beanstalk is doing under the hood, you probably don’t need it; you could manage your EC2 instances directly.

After supporting this particular logistics startup for a while, we advised sennder to move away from Elastic Beanstalk in the long term. They even hired a dedicated DevOps engineer to support this process. In fact, last week, our developers helped their team to completely migrate from Elastic Beanstalk to EKS — Amazon’s managed Kubernetes service.

Things have been a lot easier since then — to quote one of the lead developers, “it felt soooooo good to kill EB”.

Conclusion: When is an app not suitable for Elastic Beanstalk?

It all boils down to the complexity of your app and how many background tasks it needs to perform. Elastic Beanstalk should be fine if you’re building a simple app such as a task-tracking tool or an online gift registry.

But let’s say you’re building an app that has to display different layers of geospatial data on a map (position of trucks, traffic flow, estimated arrival). You’re constantly processing files uploaded by carriers, recalculating space availability, providing updated price estimations, and sending notifications to thousands of users.

These are all tasks that need to be performed in the background, in separate processes that don’t impact the performance of the core app and its front end. This is not an app you want to run in an Elastic Beanstalk environment, which assumes your app runs as one main process.

On top of that, there are many moving parts and interactions with third-party services and large sums of money at stake if outages occur. In this case, you don’t want to run into unpredictable issues like exceeding a hard limit on environment variables or an environment that simply refuses to start for no tangible reason.

If all this sounds anything like a project that you’re planning, do what Amazon told us to do: go for a more advanced product.