By Ronny Shani | Contributing editor: Stephan Schulze

Before writing even a single line of code, Stefanie Engel, Director of Software Engineering, and her former colleague, Zoltan Kincses, focused on the bigger picture: Strategy and execution; information architecture and organization design; and people processes (culture, onboarding, and recruitment).

“We were working closely with one of our portfolio companies and noticed that things weren’t going well. One core problem was the lack of a clear overview of what exactly was going wrong. The company was caught in a typical cycle of complaints and finger-pointing between tech and other departments.

To solve that, we decided to introduce engineering KPIs (Key Performance Indicators) into the development process to make problems visible and achieve progress by encouraging developers to fix their processes”.

This work and experience prompted a unique outcome: Velojiraptor, a Go-based open-source app we developed and use across our portfolio companies, to help teams measure specific KPIs.

That challenge isn’t unique to our portfolio company: many companies suffer from similar problems, pulling the “we can’t measure that” card as an excuse for the lack of a clear business strategy and unclear ownership. “It’s sometimes easier to close your eyes, particularly when the reality might hurt. It’s an industry-wide issue, but setting the correct KPIs and regularly measuring them helps you understand the impact of any technical decision. We can even compare Company A to Company B and analyze why one is doing better or worse.”

So, the first step was to provide visibility and convince various stakeholders that a) we can quantify the problems and b) the numbers we generate are significant: they’re invaluable for improving the efficiency of the engineering organization.

When we didn’t find a tool that allowed us to implement a good measurement framework, we developed it ourselves.

Can you tell us about this tool and the framework behind it?

Zoltan: Velojiraptor pulls data from Jira and determines how long tickets stay in different states until they are finally closed.

We can do that for specific ticket types (such as bugs), but the most important thing is that we can calculate the average lead time to identify flaws in the development cycle. That’s a feature that Jira, unfortunately, doesn’t provide out of the box.

We focus on tickets because they represent tasks and have a history: We know their type, context, dates, and status. That’s why an issue-tracking tool like Jira is vital.

Our metrics were inspired by the DORA (DevOps Research and Assessment) metrics, which are less about developer productivity and more about indicating the speed and quality of the changes your team ships.

We focused on three KPIs:

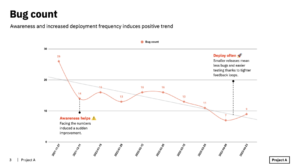

- Number of bugs

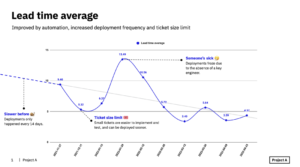

- Lead time

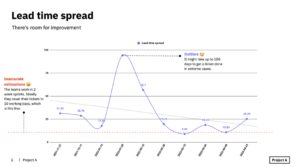

- Lead time spread

Number of bugs is straightforward and clear. The fewer open bugs you have in your systems, the better the quality and the value for your users.

Lead time is an interesting parameter that you can also communicate to non-technical stakeholders. We measure it by calculating how long it takes tickets to complete the journey from the To-do list to the Done list. In other words, how quickly do you deliver results to your users?

This data is valuable not only from an engineering perspective. As we strive to optimize the flow of work and ship changes fast, reviewing lead time and investigating how much time tickets stay in a specific status helps identify blockers.

You can, for example, see that tickets remain a minimum of 2 days in Code Review, which is something you can work on with your developers.

When we started, the company had a 16-day lead time. Now they stand at 3.5–4 days, which is an excellent result for them.

The third factor is the lead time spread, which shows the difference between the minimum and maximum lead time during a specific period. For example, if the shortest ticket took one day to deliver and the longest took 31 days, the spread is 30 days.

This metric leaves the product manager some wiggle room to improve various aspects: sizing tickets, aligning better with stakeholders, and optimizing the flow. The lower the spread, the more predictable and reliable the delivery, which leads to a better experience for stakeholders.

(1) A high bug count can indicate poor testing and large deployments, which result in wasted time and unpredictable delivery times.

(2) A high average lead time can signal flawed processes or deployment difficulties, resulting in slow delivery and often more bugs.

(3) A high lead time spread may point to a single point of failure (e.g., a person’s absence). It can also indicate poorly implemented features, which require significantly more effort to deliver. The results are inaccurate estimates and unpredictable delivery times.

Metrics shape behavior

Several tools enable teams to get the data they need to improve speed and quality, including Athenian, SmartBear, and Sleuth. While it isn’t as polished, Velojiraptor is simple and provides information that helps detect sub-optimal development processes by pinpointing the source of the problem.

You can see where time is wasted and implement a checklist of best practices:

- Automate as much of code review, testing, and deployment as possible so people don’t become bottlenecks. Simplified workflows increase confidence and optimize human effort.

- Reduce the size of work chunks. Instead of delivering one big change a person works on for weeks, deliver small changes rapidly.

- Deploy often. With a smaller set of changes, fewer bugs slip through the cracks, resulting in faster cycles and higher quality.

- Smaller deployments make for better apps. You get fewer bugs and quicker, more frequent releases (either new features or bug fixes).

- Deliver incrementally. Strategic, staged rollouts limit the negative business impact and let you provide value to users much earlier.

Whichever tool you choose, it’s important to remember that what you decide to measure will determine what’s important to your team.

A group of researchers considers this measurement to be only a part of a holistic developer productivity framework dubbed SPACE: Satisfaction and well-being, Performance, Activity, Communication and collaboration, and Efficiency and flow (that’s where Velojiraptor fits).

“Developer productivity is necessary not just to improve engineering outcomes, but also to ensure the well-being and satisfaction of developers, as productivity and satisfaction are intricately connected”.

Zoltan: “You should encourage people to review and reflect on the data. That’s why you need to cater to both groups: leads and developers.

How do you make this data accessible and valuable? You provide actionable recommendations, make sure you don’t waste managers’ time, and offer a good developer experience: An engineer wouldn’t start digging around in the data unless it relates to their current work. What’s relevant to them is how their work contributed to the project. That’s why it might be better to send a Slack message saying, ‘That’s how the team did the past week. How do you feel about this? Does it make you happy, or do you need to improve something?’

The next step would be a retrospective meeting: Sit down, look at the data, and analyze it. ‘Our lead time went up. Why do you think that is?’ That’s what makes these numbers relevant for the entire team: They can gain insights and come up with ways to improve performance.”

Stefanie: “But it’s not just about the numbers. It also helps to collect feedback from the dev team. It can be a simple Google Form asking about their daily assignments and how much they like or dislike certain aspects. After applying changes, you re-evaluate their satisfaction rate”.

How did they cut down bugs and lead time?

Zoltan: “We sat down with the people responsible and talked about their desired goal. Their lead time target, for example, was five days (instead of 31 days when we started), and now it’s 3.5 days, so they’re doing much better.

Awareness and visibility are great motivators. When people became aware of the data, they started implementing changes and saw the curve trending in the right direction. Then they just flowed with it to see how far they could push it. It’s an organic way to motivate people: We started measuring, and it influenced people”.

Saying “We can’t measure this” is usually an excuse that pops up when there isn’t a clear business strategy.

Stefanie: “This only works if teams use a tracking tool (Jira, Trello, GitHub Issues, etc.) and update the ticket status, but that’s also how you motivate them: Once you see that your tickets stayed in To-do for four weeks when you actually completed them two weeks ago, you’ll be more mindful about updating their status next time.

It’s about communicating, having good numbers to show, and gamification: ‘This week we’re at 10. Let’s try to get it down to eight next week’”.
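The weekly Slack update Zoltan describes and the gamified target Stefanie mentions can be combined in a small message builder. The Go sketch below only formats the text: it assumes the tracked number is the average lead time in days (the interview doesn’t say which metric the "10" refers to), uses hypothetical function names, and leaves posting to Slack out of scope.

```go
package main

import "fmt"

// trend reports the direction of the week-over-week change.
func trend(pastWeek, weekBefore float64) string {
	switch {
	case pastWeek < weekBefore:
		return "down"
	case pastWeek > weekBefore:
		return "up"
	default:
		return "unchanged"
	}
}

// weeklySummary builds the text of a hypothetical weekly team update that
// compares the past week's average lead time (in days) with the week before
// and with the team's target.
func weeklySummary(pastWeek, weekBefore, target float64) string {
	goal := fmt.Sprintf("%.1f days above our %.1f-day target", pastWeek-target, target)
	if pastWeek <= target {
		goal = fmt.Sprintf("within our %.1f-day target", target)
	}
	return fmt.Sprintf(
		"Past week's average lead time: %.1f days (%s from %.1f), %s. "+
			"How do you feel about this? Does it make you happy, or do you need to improve something?",
		pastWeek, trend(pastWeek, weekBefore), weekBefore, goal)
}

func main() {
	fmt.Println(weeklySummary(3.5, 4.0, 5.0))
}
```

Keeping the message short and ending it with the open questions mirrors the intent in the interview: the numbers start a conversation in the retrospective rather than replace it.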

Zoom in on engineering

We can classify software development KPIs into two categories:

- Engineering organization KPIs: lead time, cycle time, bugs, etc. These are generally indicators of the engineering organization’s health and can help detect problems: Slow deployments, poor QA, and complex processes. The stakeholders responsible for these KPIs are engineering managers and management, who focus on ensuring users benefit from the development work as quickly as possible.

- Business KPIs: conversion rate is a good example. A team developing an online shop, for instance, can reduce the release bounce rate by implementing clever changes, like better address validation. The stakeholders can include developers, the engineering manager, the product manager, the warehouse staff, and management. All of them are interested in optimizing business processes, which can save money across the board, leading to higher margins and revenue.

Focusing on the engineering organization rather than on high-level, company-wide KPIs allowed the team to “ignore” broader scopes (including product, design, and other related processes) and avoid potential internal resistance.

Zoltan: “Our process is incremental: We don’t expose the big procedural problems, which people don’t always want to tackle (or even admit they exist). We measure progress in smaller chunks and show it contributes to optimizing other areas.

We do the same thing that we recommend any tech organization does to optimize their product: Measure and analyze the data, make small improvements and deliver them quickly, repeat”.

Stefanie: “A data-driven company should have access to as many data points as possible. It should be transparent.

Since the business value is almost always affected by the quality of the engineering team, these KPIs provide a lot of insight: Engineering KPIs contribute to business KPIs, and our tool makes it easy to access this data”.

Call for contributors: Velojiraptor++

Velojiraptor is an open-source app (MIT license) developed by Zoltan Kincses and maintained by the Project A Tech team. You’re welcome to use it and even more welcome to help us improve and develop it further.

Need some inspiration? Here are a few suggestions and feature requests: