By Ha Duong

The field of computer vision has made significant progress over the last few years. Since 2012, when a team led by AI researcher Geoffrey Hinton achieved a dramatic breakthrough in the ImageNet Challenge, deep learning has gone through a major revolution. Neural networks of many kinds and architectures have enabled software to surpass human-level accuracy on tasks such as object classification and semantic segmentation in images, as well as action recognition and object tracking in videos.

Today, machines learn to see and perceive the world

However, most of these scientific advancements have not made it into applied real-world use cases. The demanding prerequisites of access to the right datasets, enough computing power, and high-quality ML talent have limited computer vision (CV) capabilities mainly to the tech giants and a few selected OEMs and Tier-1 suppliers in the automotive and robotics industries. For the world to make further leaps in this field and benefit from the potential of automation, we can’t rely only on a few key players that are incentivized to reap the fruits of their successes through monopoly prices. We are big advocates for decentralization, and we believe that we need to find a way to democratize access to computer vision capabilities by reducing the production cost of CV applications.

We need to create an affordable, accurate, secure, and robust perception layer for machines

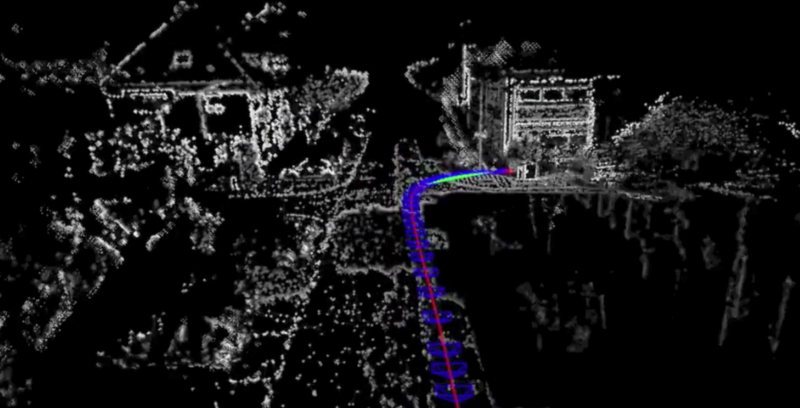

All sorts of physical autonomous agents, such as cars, drones, and industrial robots, require a means of “vision” to interact with and navigate in uncertain and highly dynamic real-world environments. LiDAR, the current standard for autonomous driving, is expensive and hard to scale. SLAM technology has emerged as an affordable camera-based solution to record the surroundings and localize the agent within them.

Once autonomous agents need to interact with each other in a dynamically changing environment, complexity increases considerably. An autonomous fleet operating in real-world environments needs a real-time 3D map for navigation and path planning. Real-time 3D reconstruction (mapping) is required for autonomous driving and robotics use cases, but current 2D mapping players only provide static maps that are either inaccurate or incompatible. Furthermore, existing solutions cannot provide automated semantic segmentation, resulting in manual annotation and labeling work that consumes time and money.

“Dynamic 3D Mapping that requires a highly precise localization to ensure secure navigation will be indispensable for self-driving vehicles of all kind, no matter if it’s about cars, logistics vehicles, or robots for delivery”

— Prof. Dr. Daniel Cremers

Artisense enables vehicles and infrastructure to collectively see, learn, collaborate, and exchange map and localization data in real time. This lets autonomous agents navigate and interact safely and creates the perception layer for machines.

Artisense is a vertically integrated full-stack computer vision software company commercializing proprietary Visual SLAM and deep learning technology for applications in the fields of automotive, drones, robotics, and manufacturing.

The team uses off-the-shelf cameras as affordable commodity hardware and enhances their capabilities with world-class Visual SLAM software to perform precise positioning and orientation tasks for navigation in unknown environments. This can replace expensive LiDAR or unreliable GPS with cameras that receive regular over-the-air updates. Their spatial intelligence software can track the coordinates of moving objects and compute time-series predictions of their movements.
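To make the idea of predicting an object's movement from tracked coordinates more concrete, here is a minimal sketch that extrapolates a track with a simple constant-velocity model. This is an illustrative assumption, not Artisense's actual method; the function name `predict_positions` and its parameters are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in metres


def predict_positions(
    track: List[Tuple[float, Point]], horizon_s: float, steps: int
) -> List[Point]:
    """Extrapolate a track assuming the object keeps its latest velocity.

    track: time-stamped positions (t_seconds, (x, y, z)), oldest first.
    horizon_s: how far into the future to predict, in seconds.
    steps: number of evenly spaced predictions up to the horizon.
    """
    if len(track) < 2:
        raise ValueError("need at least two observations to estimate velocity")
    (t0, p0), (t1, p1) = track[-2], track[-1]
    dt = t1 - t0
    if dt <= 0:
        raise ValueError("timestamps must be strictly increasing")

    # Velocity estimated from the two most recent observations.
    velocity = tuple((b - a) / dt for a, b in zip(p0, p1))

    predictions = []
    for k in range(1, steps + 1):
        tau = horizon_s * k / steps  # time offset from the last observation
        predictions.append(tuple(p + v * tau for p, v in zip(p1, velocity)))
    return predictions


# Hypothetical track: an object moving 1 m/s in x and 2 m/s in y.
track = [(0.0, (0.0, 0.0, 0.0)), (1.0, (1.0, 2.0, 0.0))]
future = predict_positions(track, horizon_s=2.0, steps=2)
```

A production system would more likely use a filter that models noise and acceleration, but the constant-velocity case shows the basic shape of a time-series prediction over tracked coordinates.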

Dynamic Global Map

Data generated by the sensors is then used to crowdsource a dynamic, globally consistent, and synchronized 3D map that reflects the topological structure of the environment. The dynamic global map will include moving objects, which are rapidly updated with new spatial information. Artisense is creating the leading technology and data standard to scale up map creation. The team works together with vehicle owners, fleet operators, OEMs, and mobility providers who install the stereo vision sensors and contribute to the global map. These partners are economically incentivized to provide access to their data in order to enable their own autonomy services or run data analytics. This also creates opportunities for new business models that monetize recorded vehicle sensor data.
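As a rough illustration of how observations from many contributors could be merged into one shared map, the sketch below keeps a running mean position per landmark. It is a deliberately simplified assumption: the `SharedMap` class, the string landmark IDs, and the plain averaging are all hypothetical and do not describe Artisense's data standard.

```python
from typing import Dict, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in a common map frame


class SharedMap:
    """Toy crowdsourced map: one running-mean position per landmark ID."""

    def __init__(self) -> None:
        # landmark_id -> (mean position, number of observations so far)
        self._landmarks: Dict[str, Tuple[Point, int]] = {}

    def add_observation(self, landmark_id: str, position: Point) -> Point:
        """Fold one contributor's observation into the map, return the new mean."""
        mean, count = self._landmarks.get(landmark_id, ((0.0, 0.0, 0.0), 0))
        count += 1
        # Incremental mean update: no need to store past observations.
        mean = tuple(m + (p - m) / count for m, p in zip(mean, position))
        self._landmarks[landmark_id] = (mean, count)
        return mean

    def position(self, landmark_id: str) -> Point:
        return self._landmarks[landmark_id][0]


shared = SharedMap()
shared.add_observation("corner-17", (0.0, 0.0, 0.0))  # seen by vehicle A
shared.add_observation("corner-17", (2.0, 4.0, 6.0))  # seen by vehicle B
```

The incremental update keeps storage per landmark constant, which matters when many vehicles report the same places. A real system would also have to align contributors into a common frame and handle moving objects, which this sketch ignores.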

Flexible and Efficient Technology

Accurate Visual SLAM technology is a requirement for creating this global map. Simultaneous localization and mapping (SLAM) is the problem of having a device localize itself, build a map of its surroundings, and navigate in an unknown space. Artisense’s team around Prof. Dr. Daniel Cremers (AD researcher, Leibniz Award holder, more than 17k citations according to Google Scholar) has advanced SLAM technology far enough to enable accurate 3D maps. It is important to emphasize that their platform is sensor-agnostic and that a purely camera-based setup is only the affordable initial offering. We envision further improvements in the future, powered by complementary sensors (RGB and depth sensors, inertial measurement units, and even LiDAR, GPS, event cameras, etc.) and sensor fusion, which will improve the global map even more. Map data can then be further enhanced with deep learning for sensor pose estimation, object recognition, or semantic labeling of dense scene maps.

The beauty of this is that Artisense’s software is compatible with different combinations of sensors or cameras. It is a low-cost solution that doesn’t rely on GPUs, since it requires a fraction of the computing power of other methods. It also produces only lightweight datasets, which can be transferred easily over limited network bandwidth. The data is encrypted and meets high standards for IT security and data authentication. Artisense enables real and safe autonomy use cases and can be further integrated into an SoC or ASIC.
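The localization half of the SLAM problem described above can be sketched in a few lines: a device estimates its pose by chaining the relative motions it measures between frames. The example below does this in 2D. It is a minimal sketch under stated assumptions, not Artisense's algorithm; the names `compose` and `integrate` are hypothetical, and a real SLAM system would also build the map and correct accumulated drift.

```python
import math
from typing import Iterable, Tuple

Pose = Tuple[float, float, float]  # (x, y, heading in radians) in the map frame


def compose(pose: Pose, delta: Pose) -> Pose:
    """Apply a relative motion, expressed in the device's own frame, to a pose."""
    x, y, theta = pose
    dx, dy, dtheta = delta
    # Rotate the local displacement into the map frame, then translate.
    new_x = x + math.cos(theta) * dx - math.sin(theta) * dy
    new_y = y + math.sin(theta) * dx + math.cos(theta) * dy
    # Wrap the heading to (-pi, pi] so it stays comparable over long runs.
    total = theta + dtheta
    new_theta = math.atan2(math.sin(total), math.cos(total))
    return (new_x, new_y, new_theta)


def integrate(start: Pose, deltas: Iterable[Pose]) -> Pose:
    """Chain relative motions, e.g. frame-to-frame estimates from a camera."""
    pose = start
    for delta in deltas:
        pose = compose(pose, delta)
    return pose


# Hypothetical motion: 1 m forward while turning left 90 degrees, then 1 m forward.
final_pose = integrate((0.0, 0.0, 0.0), [(1.0, 0.0, math.pi / 2), (1.0, 0.0, 0.0)])
```

Because every relative estimate carries a small error, a pose obtained purely by chaining drifts over time. This is one reason a consistent map and additional sensors are valuable for correcting the estimate.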

Use Cases

The spectrum of possible use cases ranges from industrial to daily mobility scenarios. With obstacle avoidance and path planning abilities, robots will be able to perform intelligent long-term scene interactions. Delivery robots inside and outside closed warehouse environments can be produced more cheaply by replacing expensive laser-based perception systems. In urban areas, drones can be navigated and coordinated more autonomously, individually or in fleets. Autonomous driving, ADAS capabilities, and traffic management in smart cities can be further improved to better predict and prevent accidents. In factories, product assembly control and error detection systems can be enhanced. Industrial manufacturing robots can be programmed dynamically instead of statically, enabling collaborative robotics with interactions between humans and co-bots.

Artisense is already working on a few of these use cases together with enterprise partners in the mobility and robotics spaces. The team has a strong link to business and research and draws great talent while executing on product and strategic milestones. We have been impressed by the progress the team has made in the short time since we initially talked to them.

At Project A, we believe in the long-term vision of Artisense. We see SLAM technology as an essential enabler for spatial computing and physical autonomous agents. We want to invest in the development of this technology because it has only begun to realize its full potential. The dynamic 3D global map opportunity can create a new multi-billion-dollar industry and act as the underlying infrastructure for smart cities and autonomous systems and fleets. In the long term, this map data can evolve into a utility that is traded via map data marketplaces and used to train machine learning applications.

We are happy to partner with Artisense and our co-investor Vito Ventures in this financing round. We wish Andrej, Daniel, Till, and the rest of the team all the best and look forward to witnessing and being part of their accomplishments.

References

Davison, A. J., Reid, I. D., Molton, N. D., & Stasse, O. (2007). MonoSLAM: Real-time single camera SLAM. IEEE Transactions on Pattern Analysis and Machine Intelligence, 29(6), 1052–1067.

Engel, J., Koltun, V., & Cremers, D. (2018). Direct sparse odometry. IEEE Transactions on Pattern Analysis and Machine Intelligence, 40(3), 611–625.

Engel, J., Schöps, T., & Cremers, D. (2014). LSD-SLAM: Large-scale direct monocular SLAM. European Conference on Computer Vision, 834–849, Springer, Cham.

Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems, 1097–1105.